In a LinkedIn comment from Saleem Syed:

As a QA engineer, my goal now is to stay hands-on rather than just informed. Looking for structured ways to:

* Apply AI in real testing scenarios

* Build repeatable workflows

* Keep up without getting overwhelmed

I'll take a stab at answering the first one. When it comes to applying AI in real testing scenarios, I think it's important to break down where to use AI. A test that you run manually every release cycle and that has no automated equivalent is a great candidate. AI shines at writing code... (both good and bad code). With the right skill or prompt, you can get great code. The workflow below assumes an existing test automation framework and Claude Code (though any similar agentic coding tool will work).

1. Enter plan mode and give your LLM 1-3 test scenarios with step-by-step instructions. Ask it not to write any code yet, but to create a plan for how it will tackle the work, broken down into three further steps: 2) creating the test data/state of the app, 3) creating page objects, and 4) writing the tests.

2. Based on the plan, proceed with step 2: create any test data and set up the state the test needs (for example, whether or not the user needs to be authenticated).

3. Have the agentic tool use playwright-cli (be sure it is installed, along with its skill, before running) to visit the web page and explore the application, following the steps in the test plan. Prompt it to follow the page object model and create page object classes for any elements the test will need.

4. Have the agentic tool write the tests using the page object classes.

Following this pattern should yield good results, getting you 80-90% of the way toward a reusable flow that you can further customize and tailor to your team.
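To make steps 3 and 4 concrete, here is a minimal sketch of what a page object and a test built on it might look like. Everything in it is an assumption for illustration: the `LoginPage` class, its selectors, the URL, and the credentials are hypothetical, and `FakePage` is an in-memory stand-in so the example runs without a browser. It only mirrors a few method names from Playwright's Python `Page` (`goto`, `fill`, `click`, `text_content`); in a real framework you would pass in the actual page object instead.

```python
from dataclasses import dataclass, field
from typing import Optional


class LoginPage:
    """Page object: the only place that knows the login screen's selectors."""

    URL = "https://example.test/login"  # hypothetical URL
    USERNAME = "#username"              # hypothetical selectors
    PASSWORD = "#password"
    SUBMIT = "button[type=submit]"
    BANNER = "#banner"

    def __init__(self, page):
        self.page = page

    def open(self) -> "LoginPage":
        self.page.goto(self.URL)
        return self

    def log_in(self, username: str, password: str) -> None:
        self.page.fill(self.USERNAME, username)
        self.page.fill(self.PASSWORD, password)
        self.page.click(self.SUBMIT)

    def banner_text(self) -> Optional[str]:
        return self.page.text_content(self.BANNER)


@dataclass
class FakePage:
    """Hypothetical in-memory stand-in for a browser page (not a Playwright object)."""

    url: str = ""
    fields: dict = field(default_factory=dict)
    text: dict = field(default_factory=dict)

    def goto(self, url: str) -> None:
        self.url = url

    def fill(self, selector: str, value: str) -> None:
        self.fields[selector] = value

    def click(self, selector: str) -> None:
        # Simulate the app under test: submitting the form sets the banner.
        if selector == LoginPage.SUBMIT:
            user = self.fields.get(LoginPage.USERNAME, "")
            password = self.fields.get(LoginPage.PASSWORD, "")
            if user == "qa_user" and password == "s3cret":
                self.text[LoginPage.BANNER] = f"Welcome, {user}"
            else:
                self.text[LoginPage.BANNER] = "Invalid credentials"

    def text_content(self, selector: str) -> Optional[str]:
        return self.text.get(selector)


def test_valid_login_shows_welcome_banner():
    # The test reads as user intent; selectors stay inside the page object.
    login = LoginPage(FakePage()).open()
    login.log_in("qa_user", "s3cret")
    assert login.banner_text() == "Welcome, qa_user"
```

The point of the split is that when the login screen changes, only `LoginPage` needs updating, which also gives the agentic tool a narrow, well-defined file to regenerate.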

Headlines & Launches

The Future QA Customer Is the AI, Not the Tester

via LinkedIn (Jason Huggins)

Selenium creator Jason Huggins makes a pointed argument: the next wave of app builders - vibe coders who won't know or care about Playwright vs Selenium - just want things to work. The real competition for testing tools isn't other frameworks; it's becoming the default choice for the AI systems building apps on their behalf.

Claude Code Auto-Fix: Background PR Fixes in the Cloud

via X (Lydia Hallie / Anthropic)

Claude Code now auto-fixes PRs in the background - turn on the setting and it will follow your PRs remotely, fixing CI failures and addressing review comments while you do something else. Works on web, mobile, or by pasting any PR URL. Walk away and come back to a green PR.

When AI Generates Both the Code and the Tests, It Just Makes It Pass

via LinkedIn (Zane Rappa)

A tester's warning about vibe coding: when AI writes the code and writes the tests, its only goal is green. That's not validation - that's simulation. Rappa has seen production systems pushed in a "passing" state while broken underneath, and teams told to stop reading the code entirely. Delivery metrics look great. Quality erodes.

An AI Agent Fixed 13 Flaky Tests Overnight While I Slept

via X (Gianfranco Piana / Gumroad)

Gumroad pointed their OpenClaw-based AI assistant at their test suite and walked away. One week later: 206 commits, 94 CI runs, 13 merged PRs fixing flaky tests - race conditions, timing issues, browser corruption. The agent's killer feature: an ideas backlog that forces it to write down failed approaches so it never repeats the same mistake. The best find wasn't even a flaky test - it was a real bug in file ID remapping. Open source: openclaw-autoresearch.

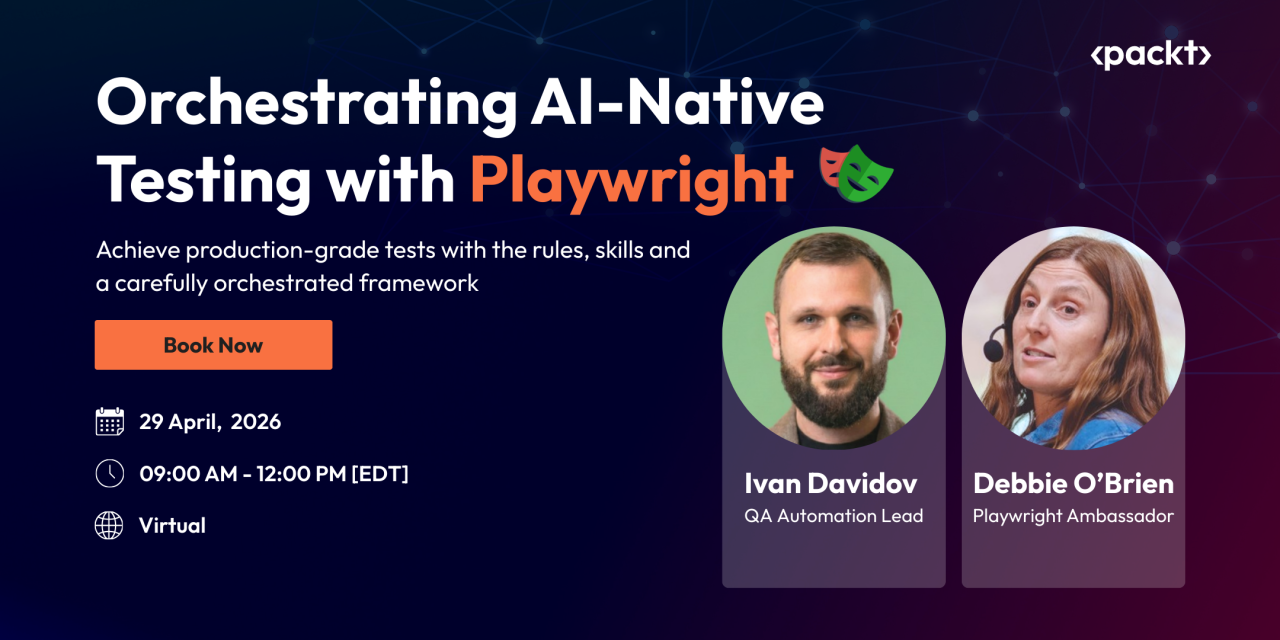

If you’re working with Playwright, test automation, or exploring how AI fits into your workflow, this should be worth your time. You can also grab your seat with an exclusive 40% early bird discount using code: BUTCH40

Tools & Frameworks

AppClaw: Agentic Mobile Testing Built on Appium MCP

via LinkedIn (TestMu AI / Sai Krishna)

AppClaw sits on top of appium-mcp as the reasoning layer - give it a goal in plain English and it perceives the screen, reasons through state, and acts. Vision mode used 70% fewer tokens than DOM-based runs (7k vs 23k). Early project, but a compelling direction for agentic mobile testing.

Expect: Let Agents Test Your Code in a Real Browser

via X (Aiden Bai)

Open-source CLI and agent skill for agentic browser testing - run Claude Code or Codex against your app, get a video of every bug found, fix and repeat until passing. Drop in as an agent skill or run standalone from the CLI.

Alumnium Is SOTA on WebVoyager: 98.5% with Claude Code and Selenium

via alumnium.ai (Alex Rodionov / Airbnb)

New state of the art on WebVoyager using Selenium and accessibility trees - no screenshots, no custom browser stack. General-purpose agents (Claude Code + MCP) outperformed isolated "black box" agents. 610 real-world tasks completed for $5 total. The era of expensive custom browser agents may be ending.

Techniques & Tutorials

QA in the AI Age: Real-World Principles from the Field

via LinkedIn (Ben Fellows / Loop Software & Testing)

Ben Fellows shares principles from live client engagements: start from code not just UI/API, add your own test IDs for testability, use AI to keep up with AI-generated code volume, run multiple Claude Code instances in parallel, and use manifest files with definitions of done to stop AI claiming tasks are finished when they aren't. A preview of a formal methodology he's pulling together.

Fixing Playwright Tests with AI: What Prompts Need to Actually Work

via Currents

A practical breakdown of what it takes to fix flaky and broken Playwright tests using AI - what context to include, how to structure failure info, and what separates a fix that sticks from one that just moves the problem somewhere else.

The Honest QA Engineer's Guide to an Agentic Pipeline with Playwright MCP and Claude Code

via QA Foundation (Michelle Cortes-Advincula)

Nine months of real experimentation, not a demo. Michelle evaluated Playwright MCP and Claude Code against actual pain points - maintenance burden, script completion speed, skills gaps, ROI. MCP is impressive on simple scenarios; locator strategies still matter when complexity rises. A full architecture she can now consistently run.

Falling behind on test automation and AI adoption? DevClarity's QA Practice gets your team up to speed fast - with hands-on training, proven workflows, and measurable results within 30 days.

Research & Data

AI and Testing: Local LLMs and LangChain (Part 3 of series)

via Tester Stories (Jeff Nyman)

Part 3 of Jeff Nyman's AI and Testing series: using a local LLM from Ollama and introducing LangChain as the orchestration layer. Covers two key testability properties in this context and how LangChain's composable pipelines change how you think about testing AI applications.

Testing in the AI Era

via Tech League Podcast, ep. 17 (Alan Richardson / Evil Tester)

Alan Richardson brings 30 years of testing perspective to the AI moment. His argument: architecture-first development is the key to getting good test output from AI agents - good code structure leads to good tests, not the other way around. He also makes the case that self-healing tests are a red flag (the tool is hiding instability, not fixing it), that TDD mostly doesn't work ⁉️ with current AI tooling, and that domain expertise becomes more valuable - not less - when everyone has access to the same generalist tools.

Quick Links

How GenAI Works - Harvard Kennedy School Generative AI Course (Class 1) (Harvard Kennedy School) - free public course covering LLM foundations, alignment, and real-world implications

README Wizard: Building an Agent Skill for Consistent Docs (Debbie O'Brien) - every good repo deserves a useful README; this skill makes it repeatable

How Does Anthropic Do QA So Fast? (r/ClaudeAI) - the question most QA engineers are quietly asking; the comments are worth a read

Playwright Agents Won't Replace Senior SDETs - They Make Them Essential (Igor Dorovskikh, LinkedIn)

How I Use AI as an SDET: Code Generation and Test Analysis (Tricentis ShiftSync)

If this resonates with you, chances are it'll resonate with someone on your team too. Sometimes the best thing you can do for a colleague is share something you learned that just might make their day a little easier.