This week's issue is packed to the brim, but I want to challenge you before you dive in. For every article you read, spend at least as much time actually trying something. Open a terminal. Run a model. Break a tool. The doing is where the learning happens, and the hardest part is always just getting started.

That's the whole goal of this newsletter: not just to keep you informed, but to push you to grow... and growth in this space happens by reading + experimenting!

If you're not sure where to start, I'd point you straight to the Research section. Jeff Nyman's AI and Testing: Ollama and Models is Part 2 of a series he's been building for a few months now, and it walks you through getting a local LLM running on your machine step by step. Follow along. Seriously, by the end of it you'll have something running locally and a much better mental model of what all the AI noise is actually about.

Now go read something. Then go build something.

Headlines & Launches

There Is No Version of a 2026 Tester Where "I Don't Code" Is Valid

via LinkedIn (Miron Matei)

The barrier to scripted checks no longer exists - AI has lowered the wall from requiring TypeScript fluency to describing what you want in plain English. That turns "I don't code" from a skills gap into a professional choice: the question is no longer whether you can, but whether you choose to.

14 Observations from Riding Along with 50+ QA & Devs Using AI

via LinkedIn (Ben F.)

14 patterns observed from pairing with 50+ QA engineers and developers using AI for augmented coding: engineers overthink before engaging AI, treat it as a generator rather than a thinking partner, delegate tasks that are too small, and lack a defined AI-first workflow. Structural gaps include ticket-mode thinking over system-building and evaluating AI output too late rather than guiding it in real time.

Claude Wrote Playwright Tests That Secretly Patched the App to Make Them Pass

via Reddit (r/ClaudeCode)

A developer discovered Claude Code generated E2E tests that injected JavaScript to fix broken UI elements at runtime - making tests pass while hiding real bugs. The fix: adding an explicit rule to CLAUDE.md that tests must fail when features are broken and no runtime patching is allowed. A sharp reminder that AI test generation needs explicit guardrails around test integrity.
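
If you want a second line of defense beyond the CLAUDE.md rule, a small static check over generated test files can flag the most obvious runtime patching. The sketch below is only a heuristic under stated assumptions: the pattern list and the function name `find_runtime_patches` are hypothetical and not from the Reddit post, and a determined agent could evade them. It is meant as a review aid, not a guarantee.

```python
import re

# Heuristic patterns (an assumption, not an exhaustive list) for test code
# that changes the app under test instead of only observing it.
SUSPICIOUS_PATTERNS = [
    re.compile(r"\.addInitScript\s*\("),             # Playwright: inject script before page load
    re.compile(r"\.addScriptTag\s*\("),              # Playwright: inject a <script> tag
    re.compile(r"\.addStyleTag\s*\("),               # Playwright: inject a <style> tag
    re.compile(r"\.(innerHTML|outerHTML)\s*=(?!=)"),  # direct DOM rewrite (assignment only)
    re.compile(r"\.(setAttribute|removeAttribute)\s*\("),
]


def find_runtime_patches(test_source: str) -> list[tuple[int, str]]:
    """Return (line_number, stripped_line) for each line matching a suspicious pattern."""
    findings = []
    for line_no, line in enumerate(test_source.splitlines(), start=1):
        if any(pattern.search(line) for pattern in SUSPICIOUS_PATTERNS):
            findings.append((line_no, line.strip()))
    return findings


# Example input: a generated test that "fixes" a disabled button before asserting on it.
SAMPLE_TEST = "\n".join([
    "await page.goto('/settings');",
    "await page.evaluate(() => document.querySelector('#save').removeAttribute('disabled'));",
    "await expect(page.locator('#save')).toBeEnabled();",
])

for line_no, line in find_runtime_patches(SAMPLE_TEST):
    print(f"line {line_no}: {line}")
```

Run something like this in CI against the test directory and fail the build on any finding, then allowlist the rare legitimate case by hand.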

AI Is Coming for Software Jobs. QA Engineers Should Be Celebrating.

via LinkedIn (Lucas Smit)

Software has shifted from a creation bottleneck to a validation bottleneck - code is now cheap and instant, which makes trust expensive. The most valuable engineers will be those who use AI as a force multiplier in testing and can test AI itself, making QA the most important role in any software team.

Are We Celebrating the Wrong Thing?

via Test Pappy (Patrick)

A sharp response to SmartBear's BearQ agentic QA launch: if AI writes code and AI tests code, who ensures we're solving the right problem? The skills that remain irreplaceable are in the problem space - understanding what a business actually needs, asking the right questions, and thinking in systems - not in the solution space that AI is rapidly commoditizing.

Falling behind on test automation and AI adoption? DevClarity's QA Practice gets your team up to speed fast - with hands-on training, proven workflows, and measurable results within 30 days.

Tools & Frameworks

AI-Native Browser Automation Framework with Vibium, Jest, TypeScript and MCP

via LinkedIn (Kavita J.)

An open-source automation framework combining Vibium + Jest + TypeScript + Lighthouse + AI-assisted test generation + MCP browser agent workflows - covering smoke, functional, API, DB, device, E2E, and performance testing. The key design principle: AI features fit into the framework architecture rather than bypassing it.

Agentic QE v3.8.0: Safety Gates, 150x Faster Pattern Search, and Neural Model Routing

via LinkedIn (Dragan Spiridonov)

Agentic QE v3.8.0 ships a 3-filter coherence pipeline that validates every agent output before execution (catching hallucinated tool calls), 150x faster HNSW vector pattern search, and TinyDancer neural routing that learns which tasks need expensive models. Framed as the "selection layer" for AI-generated code - quality engineering as the evolutionary pressure that turns mutation into directed improvement.
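
To make "catching hallucinated tool calls" concrete, here is a minimal sketch of one possible pre-execution gate: reject any call whose tool name or required arguments are not in a known registry. This is not Agentic QE's pipeline; `ToolSpec`, `REGISTRY`, `validate_tool_call`, and the tool names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical description of a tool the agent is allowed to call."""
    name: str
    required_args: frozenset


# Hypothetical registry of tools that actually exist in this environment.
REGISTRY = {
    "run_tests": ToolSpec("run_tests", frozenset({"path"})),
    "read_file": ToolSpec("read_file", frozenset({"path"})),
}


def validate_tool_call(call: dict, registry: dict) -> list:
    """Return a list of problems with the call; an empty list means it may run."""
    name = call.get("tool")
    spec = registry.get(name)
    if spec is None:
        # The agent named a tool that does not exist: a hallucinated call.
        return [f"unknown tool: {name!r}"]

    args = call.get("args", {})
    missing = sorted(spec.required_args - set(args))
    return [f"missing argument: {arg!r}" for arg in missing]


# A made-up call to a tool that was never registered.
print(validate_tool_call({"tool": "deploy_to_prod", "args": {}}, REGISTRY))
# A well-formed call to a registered tool.
print(validate_tool_call({"tool": "run_tests", "args": {"path": "tests/"}}, REGISTRY))
```

The design choice worth copying is the order of operations: validate first, execute second, so a bad call never reaches a real system.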

Taming the Trowser: User Guide for LLM and Exploratory Testing

via The Test Eye (Rikard Edgren)

A practical guide to Trowser, a powerful tool for LLM and exploratory testing. Includes a dedicated "Context for LLMs" chapter worth reading even if you use other tools - Edgren is currently running it in two instances simultaneously, one for himself and one where Claude Opus does its own parallel testing session.

Playwright Oracle Reporter: Root Cause Hints, Flakiness Detection, and AI Analysis

via LinkedIn (Mihajlo Stojanovski)

Closes the gap between "test failed" and "why it failed" - uses algorithms for root cause hints and flakiness detection, runtime telemetry (CPU, memory, HDD per run), and an optional AI layer for deeper analysis. Consistent by default, with depth available when you need it. Install: npm i playwright-oracle-reporter.

Techniques & Tutorials

Building AI Agents That Really Work: Practical Tips and a Production Case Study

via LinkedIn Pulse (Ivan Smirnov)

Production lessons from building a Stream Builder Agent: split instructions across 10 focused markdown files instead of one 5,000-line dump, include examples of both correct and incorrect behavior, let the agent update its own instructions, and always work inside project context. Includes a real case study generating complex JSON configs from natural language.

Quality Engineering with AI

via Callum Akehurst-Ryan's Testing Blog

A practical testing strategy for AI-assisted development: invert the test pyramid toward E2E and acceptance tests (AI-generated code is ephemeral but product behaviour should stay static), keep regression tests out of reach of AI refactoring, and focus on four core risks - delivering the wrong thing, regression, security, and maintainability.

How I Learnt to Stop Worrying and Love Agentic Katas

via Peter Van Onselen

Introduces agentic katas - structured coding exercises for practising AI-assisted development. Traditional katas are too small (the LLM already knows the answers); agentic katas use bigger, unfamiliar domain problems that force you to practice writing plans, verifying output, setting up guardrails, and thinking alongside an agent. Inspired by how code retreats taught TDD through deliberate practice.

Research & Data

AI and Testing: Ollama and Models

via Tester Stories (Jeff Nyman) - Part 2 of series

Part 2 of Jeff Nyman's AI and Testing series covers getting Ollama running locally - treating LLMs as development dependencies rather than remote services. Explains how to choose between models like Qwen and DeepSeek, and why QA engineers with API testing experience already have the right mental model for working with local LLMs.
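
If API testing is your home turf, this is roughly what talking to a local model looks like once Ollama is running. It is a minimal sketch using only the Python standard library, not code from Jeff's article. It assumes Ollama is listening on its default port (11434) and that you have already pulled the model you name; the model tag in the usage comment is just an example.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build the POST request; kept separate so it can be inspected without a server."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for a single JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local model and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"]


# Usage (requires a running Ollama server and a pulled model), for example:
#   print(generate("qwen2.5", "List three boundary-value tests for a login form."))
```

Notice that it is just an HTTP request with a JSON body and a JSON response, which is exactly why the API testing mental model transfers so well.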

The Book That Talked Back

via Forge Quality (Dragan Spiridonov)

While shipping six releases of the Agentic QE platform, Dragan reads Bach and Bolton's "Taking Testing Seriously" and finds the Heuristic Test Strategy Model maps directly to failures in his agent fleet - tool prefix mismatches, portability bugs, and testability gaps. A sharp argument that classical testing frameworks and agentic systems are not separate worlds.

Testing Pyramid for AI Agents

via Block Engineering Blog

Block's engineering team rebuilds the testing pyramid for AI agents - replacing type-based layers with uncertainty tolerance layers: deterministic foundations (mock providers, unit tests), reproducible reality (record-and-replay for MCP servers and LLM interactions), and probabilistic performance (benchmarks measuring task completion rates rather than pass/fail assertions).
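
Here is a rough sketch of how two of those layers might look in code: a replay provider that serves recorded responses (deterministic, so ordinary assertions work) and a completion-rate benchmark for the probabilistic layer (a threshold instead of pass/fail). The names `ReplayProvider` and `completion_rate` are hypothetical and are not taken from Block's post.

```python
from typing import Callable


class ReplayProvider:
    """Stands in for an LLM by returning previously recorded responses."""

    def __init__(self, recordings: dict):
        self._recordings = recordings

    def complete(self, prompt: str) -> str:
        try:
            return self._recordings[prompt]
        except KeyError:
            # Fail loudly so a missing recording never silently hits a real model.
            raise LookupError(f"no recording for prompt: {prompt!r}") from None


def completion_rate(run_task: Callable[[], bool], trials: int) -> float:
    """Run a task repeatedly and return the fraction of successful runs."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    successes = sum(1 for _ in range(trials) if run_task())
    return successes / trials


# Deterministic layers: exact assertions against recorded output.
provider = ReplayProvider({"Summarize the failing test": "Timeout in checkout flow"})
assert provider.complete("Summarize the failing test") == "Timeout in checkout flow"

# Probabilistic layer: assert on a rate, not on a single run.
# The outcomes are canned here; a real benchmark would call the live agent.
outcomes = iter([True, True, False, True])
rate = completion_rate(lambda: next(outcomes), trials=4)
assert rate >= 0.7, f"task completion rate too low: {rate:.2f}"
```

The threshold (0.7 here) is an arbitrary example; picking it is a product decision about how much uncertainty you can tolerate.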

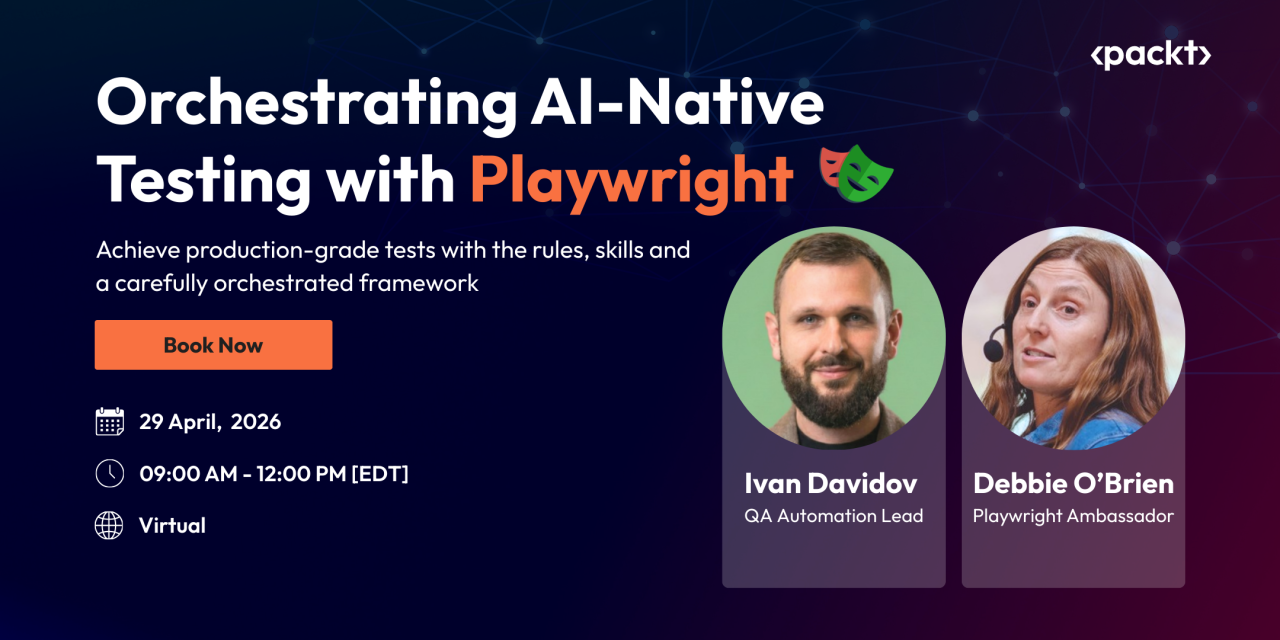

If you’re working with Playwright, test automation, or exploring how AI fits into your workflow, this should be worth your time. You can also grab your seat with an exclusive 40% early bird discount using code: BUTCH40

Quick Links

Vibium v26.3.17: Java Client Now Available on Maven Central (LinkedIn / Jason Huggins)

Vibium Player: Browser Session Recording for Debugging (Vibium) - record sessions to debug AI agent behavior; pairs with the Vibium framework in Tools this issue

BearQ by SmartBear: Autonomous AI QA Agent (SmartBear) - the launch that prompted this issue's Test Pappy headline

Multi-Model Test Case Evaluation Platform (Open Source) (LinkedIn / George Andraws)

VS Code 1.110: Agentic Browser Tools (Experimental) (VS Code Release Notes)

🔈 Six Principles of Automation in Testing: Still Relevant in 2026? (The Vernon Richard Show, podcast) - Vernon Richard and Richard Bradshaw revisit the AiT principles in light of AI advancements

If this resonates with you, chances are it'll resonate with someone on your team too. Sometimes the best thing you can do for a colleague is share something you learned that just might make their day a little easier.